Perception tool calling works with OpenAI’s Function Calling and can be configured in theDocumentation Index

Fetch the complete documentation index at: https://docs.tavus.io/llms.txt

Use this file to discover all available pages before exploring further.

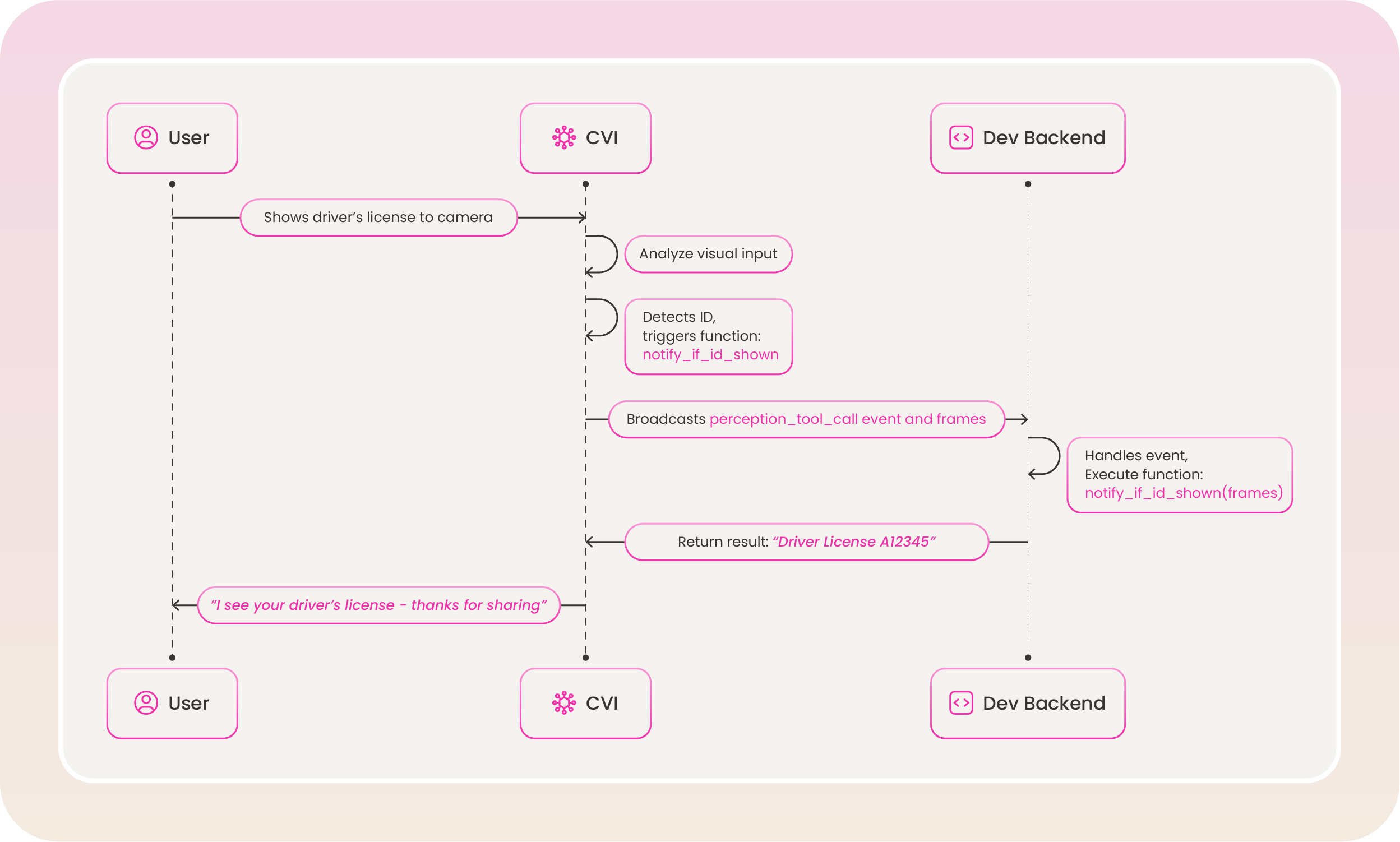

perception layer. It allows an AI agent to trigger functions based on visual or audio cues during a conversation.

You define two separate tool sets in the perception layer:

- Visual tools (`visual_tool_prompt` and `visual_tools`): triggered when Raven detects something in the video stream (e.g., an ID card, bright outfit, hat).
- Audio tools (`audio_tool_prompt` and `audio_tools`): triggered when Raven detects something in the audio stream (e.g., sarcasm, frustration).

The perception layer tool calling is only available for Raven.

Tavus does not execute tool calls on the backend. Use event listeners in your frontend to listen for perception tool call events and run your own logic when a tool is invoked. Each event includes a `modality` field (`"vision"` or `"audio"`) so you can handle visual and audio tool calls differently.

Defining Tool

Top-Level Fields

| Field | Type | Required | Description |
|---|---|---|---|
| `type` | string | ✅ | Must be `"function"` to enable tool calling. |
| `function` | object | ✅ | Defines the function that can be called by the model. Contains metadata and a strict schema for arguments. |

function

| Field | Type | Required | Description |
|---|---|---|---|
| `name` | string | ✅ | A unique identifier for the function. Must be in snake_case. The model uses this to refer to the function when calling it. |
| `description` | string | ✅ | A natural language explanation of what the function does. Helps the perception model decide when to call it. |
| `parameters` | object | ✅ | A JSON Schema object that describes the expected structure of the function’s input arguments. |

function.parameters

| Field | Type | Required | Description |
|---|---|---|---|
| `type` | string | ✅ | Always `"object"`. Indicates the expected input is a structured object. |
| `properties` | object | ✅ | Defines each expected parameter and its corresponding type, constraints, and description. |
| `required` | array of strings | ✅ | Specifies which parameters are mandatory for the function to execute. |

Include every parameter in the `required` list, even if it seems optional in your code.

function.parameters.properties

Each key inside `properties` defines a single parameter the model must supply when calling the function.

| Field | Type | Required | Description |
|---|---|---|---|
| `<parameter_name>` | object | ✅ | Each key is a named parameter. The value is a schema for that parameter. |

| Subfield | Type | Required | Description |
|---|---|---|---|
| `type` | string | ✅ | Data type (e.g., `string`, `number`, `boolean`). |
| `description` | string | ❌ | Explains what the parameter represents and how it should be used. |
| `maxLength` | number | ❌ | Maximum character length for string parameters. Must not exceed 1,000. |
| `enum` | array | ❌ | Defines a strict list of allowed values for this parameter. Useful for categorical choices. |
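The field rules in the tables above can be checked before a tool definition is sent anywhere. The following is a minimal sketch in Python, not part of any Tavus SDK: the `validate_tool` helper, the `notify_if_id_shown` tool, and its `id_type` parameter are hypothetical names used only for illustration.

```python
import re

# snake_case: lowercase words separated by single underscores.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")
MAX_LENGTH_LIMIT = 1000  # documented ceiling for maxLength


def validate_tool(tool: dict) -> list[str]:
    """Return a list of problems found in a tool definition (empty if none)."""
    problems = []
    if tool.get("type") != "function":
        problems.append('top-level "type" must be "function"')
    fn = tool.get("function")
    if not isinstance(fn, dict):
        return problems + ['"function" must be an object']
    name = fn.get("name")
    if not isinstance(name, str) or not SNAKE_CASE.match(name):
        problems.append('"name" must be a snake_case string')
    if not isinstance(fn.get("description"), str):
        problems.append('"description" must be a string')
    params = fn.get("parameters")
    if not isinstance(params, dict):
        return problems + ['"parameters" must be an object']
    if params.get("type") != "object":
        problems.append('"parameters.type" must be "object"')
    props, required = params.get("properties"), params.get("required")
    if not isinstance(props, dict) or not isinstance(required, list):
        return problems + ['"properties" must be an object and "required" an array']
    for key, schema in props.items():
        if key not in required:
            problems.append(f'parameter "{key}" is missing from "required"')
        if not isinstance(schema, dict) or "type" not in schema:
            problems.append(f'parameter "{key}" needs a "type"')
        elif schema.get("maxLength", 0) > MAX_LENGTH_LIMIT:
            problems.append(f'parameter "{key}" has maxLength above {MAX_LENGTH_LIMIT}')
    return problems


# Hypothetical tool definition used to exercise the checks.
example_tool = {
    "type": "function",
    "function": {
        "name": "notify_if_id_shown",
        "description": "Call this when the user shows an ID card to the camera.",
        "parameters": {
            "type": "object",
            "properties": {
                "id_type": {
                    "type": "string",
                    "description": "Kind of ID that was shown.",
                    "maxLength": 300,
                }
            },
            "required": ["id_type"],
        },
    },
}

print(validate_tool(example_tool))
```

A check like this only mirrors the rules listed on this page; the API may enforce additional constraints.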

Example Configuration

Here are examples of tool calling in the perception layer. Visual tools use `visual_tool_prompt` and `visual_tools`; audio tools use `audio_tool_prompt` and `audio_tools`. See Perception for full setup details.

Visual tools example

Perception Layer — visual tools
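As an illustrative sketch only, a visual tool configuration could be assembled as below. The prompt text, the tool description, and the `outfit_color` parameter are assumptions made for this example; only the field names `visual_tool_prompt` and `visual_tools`, the function name `notify_if_bright_outfit_shown`, and the `layers` / `perception` nesting come from this page. Other perception settings (such as the model selection) are omitted.

```python
import json

# Hypothetical visual tool: the prompt wording and the "outfit_color"
# parameter are illustrative assumptions, not documented values.
visual_perception_layer = {
    "visual_tool_prompt": (
        "You have a tool named notify_if_bright_outfit_shown. "
        "Use it when the user is wearing a bright outfit."
    ),
    "visual_tools": [
        {
            "type": "function",
            "function": {
                "name": "notify_if_bright_outfit_shown",
                "description": "Call this when a bright outfit is visible in the video.",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "outfit_color": {
                            "type": "string",
                            "description": "Dominant color of the outfit.",
                            "maxLength": 300,
                        }
                    },
                    # Every parameter is listed as required.
                    "required": ["outfit_color"],
                },
            },
        }
    ],
}

# Serialize the layer as it would sit inside a persona's "layers" object.
print(json.dumps({"layers": {"perception": visual_perception_layer}}, indent=2))
```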

Audio tools example

Perception Layer — audio tools
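An audio tool configuration follows the same shape. In this sketch the prompt text, the tool description, and the `reason` parameter are assumptions for illustration; the field names `audio_tool_prompt` and `audio_tools` and the function name `notify_sarcasm_detected` are taken from this page.

```python
import json

# Hypothetical audio tool: the prompt wording and the "reason"
# parameter are illustrative assumptions, not documented values.
audio_perception_layer = {
    "audio_tool_prompt": (
        "You have a tool named notify_sarcasm_detected. "
        "Use it when the user's speech sounds sarcastic."
    ),
    "audio_tools": [
        {
            "type": "function",
            "function": {
                "name": "notify_sarcasm_detected",
                "description": "Call this when sarcasm is detected in the user's speech.",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "reason": {
                            "type": "string",
                            "description": "Short explanation of why the speech sounded sarcastic.",
                            "maxLength": 300,
                        }
                    },
                    # Every parameter is listed as required.
                    "required": ["reason"],
                },
            },
        }
    ],
}

# Serialize the layer as it would sit inside a persona's "layers" object.
print(json.dumps({"layers": {"perception": audio_perception_layer}}, indent=2))
```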

How Perception Tool Calling Works

Perception tool calling is triggered during an active conversation when the perception model detects a cue that matches a defined function:

- Visual tools are triggered by what Raven sees (e.g., ID card, bright outfit, hat). The event includes a `modality` of `"vision"`, structured `arguments`, and a `frames` array of base64-encoded images that triggered the call.
- Audio tools are triggered by what Raven hears (e.g., sarcasm, frustration). The event includes a `modality` of `"audio"` and `arguments` (often a JSON string).

The same process applies to any function you define in `visual_tools` or `audio_tools`: for example, `notify_if_bright_outfit_shown` when a bright outfit is visually detected, or `notify_sarcasm_detected` when sarcasm is detected in speech.
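The dispatch logic an event listener might run can be sketched as follows. This is a Python illustration of the routing only, under stated assumptions: the event is treated as a flat dictionary, the function name is assumed to arrive in a `name` field, and the handler functions and parameter names are hypothetical. The `modality`, `arguments`, and `frames` fields are the ones described above. The same branching applies in a frontend listener.

```python
import json
from typing import Any


def parse_arguments(raw: Any) -> dict:
    """Arguments may arrive as an object or as a JSON string; normalize to a dict."""
    if isinstance(raw, str):
        return json.loads(raw)
    return dict(raw or {})


def handle_perception_tool_call(event: dict, handlers: dict) -> str:
    """Route a tool call event to the handler registered for (modality, name)."""
    modality = event.get("modality")
    name = event.get("name")  # assumption: the function name is in a "name" field
    args = parse_arguments(event.get("arguments"))
    # Only vision events carry frames (base64-encoded images).
    frames = event.get("frames", []) if modality == "vision" else []
    handler = handlers.get((modality, name))
    if handler is None:
        return f"unhandled {modality} tool call: {name}"
    return handler(args, frames)


# Hypothetical handlers; Tavus does not execute these, your own code does.
def on_bright_outfit(args: dict, frames: list) -> str:
    return f"bright outfit seen in {len(frames)} frame(s): {args.get('outfit_color')}"


def on_sarcasm(args: dict, frames: list) -> str:
    return f"sarcasm detected: {args.get('reason')}"


handlers = {
    ("vision", "notify_if_bright_outfit_shown"): on_bright_outfit,
    ("audio", "notify_sarcasm_detected"): on_sarcasm,
}

audio_event = {
    "modality": "audio",
    "name": "notify_sarcasm_detected",
    "arguments": '{"reason": "flat tone"}',  # JSON string, as audio events often send
}
print(handle_perception_tool_call(audio_event, handlers))
```

Keying handlers on both modality and name keeps visual and audio logic separate even if two tools share a name.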

Modify Existing Tools

You can update `visual_tools` or `audio_tools` using the Update Persona API. Use the path `/layers/perception/visual_tools` or `/layers/perception/audio_tools` as appropriate.
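A hedged sketch of how such an update request could be built in Python is shown below. Several details are assumptions not confirmed on this page and should be checked against the Update Persona API reference: the JSON Patch style body with a `replace` operation, the endpoint URL, the `PATCH` method, and the `x-api-key` header. The helper names and the `<persona_id>` placeholder are hypothetical. The request is only constructed here, not sent.

```python
import json
import urllib.request


def build_tools_patch(modality: str, tools: list) -> list:
    """Build a patch body targeting the documented perception tool path.

    Assumption: the API accepts JSON Patch style operations.
    """
    if modality not in ("visual", "audio"):
        raise ValueError('modality must be "visual" or "audio"')
    return [
        {
            "op": "replace",  # assumption: "add" may be needed if no tools exist yet
            "path": f"/layers/perception/{modality}_tools",
            "value": tools,
        }
    ]


def build_update_request(persona_id: str, api_key: str, patch: list) -> urllib.request.Request:
    """Construct (but do not send) the update request."""
    return urllib.request.Request(
        url=f"https://tavusapi.com/v2/personas/{persona_id}",  # assumed endpoint
        data=json.dumps(patch).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-api-key": api_key},  # assumed header
        method="PATCH",
    )


patch = build_tools_patch("visual", [])  # pass your full list of tool definitions here
request = build_update_request("<persona_id>", "<api_key>", patch)
print(request.get_method(), request.full_url)
print(json.dumps(patch, indent=2))
# To send it: urllib.request.urlopen(request)
```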

Replace `<api_key>` with your actual API key. You can generate one in the Developer Portal.